Summary measures of agreement and association between many raters' ordinal classifications - ScienceDirect

Inter-rater reliability of a national acute stroke register – research paper in Clinical medicine (CyberLeninka)

Inter-observer agreement and reliability assessment for observational studies of clinical work - ScienceDirect

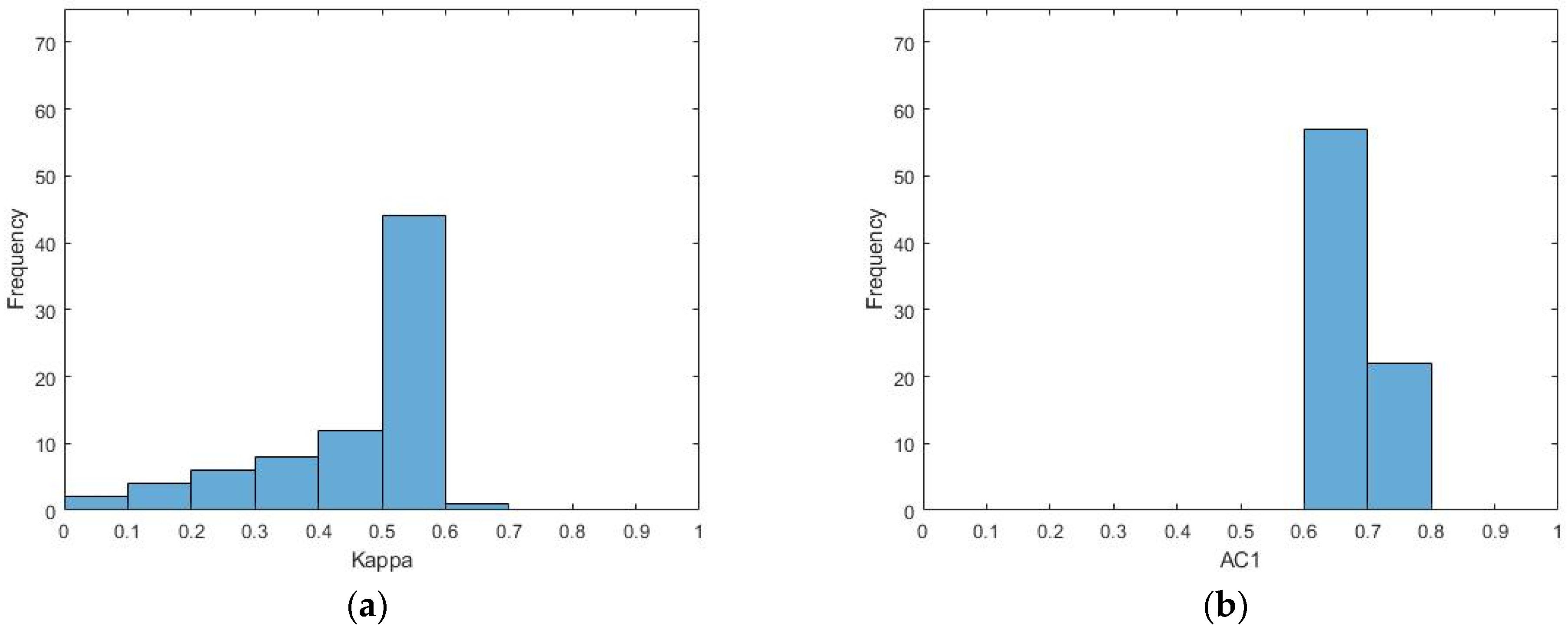

Symmetry | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

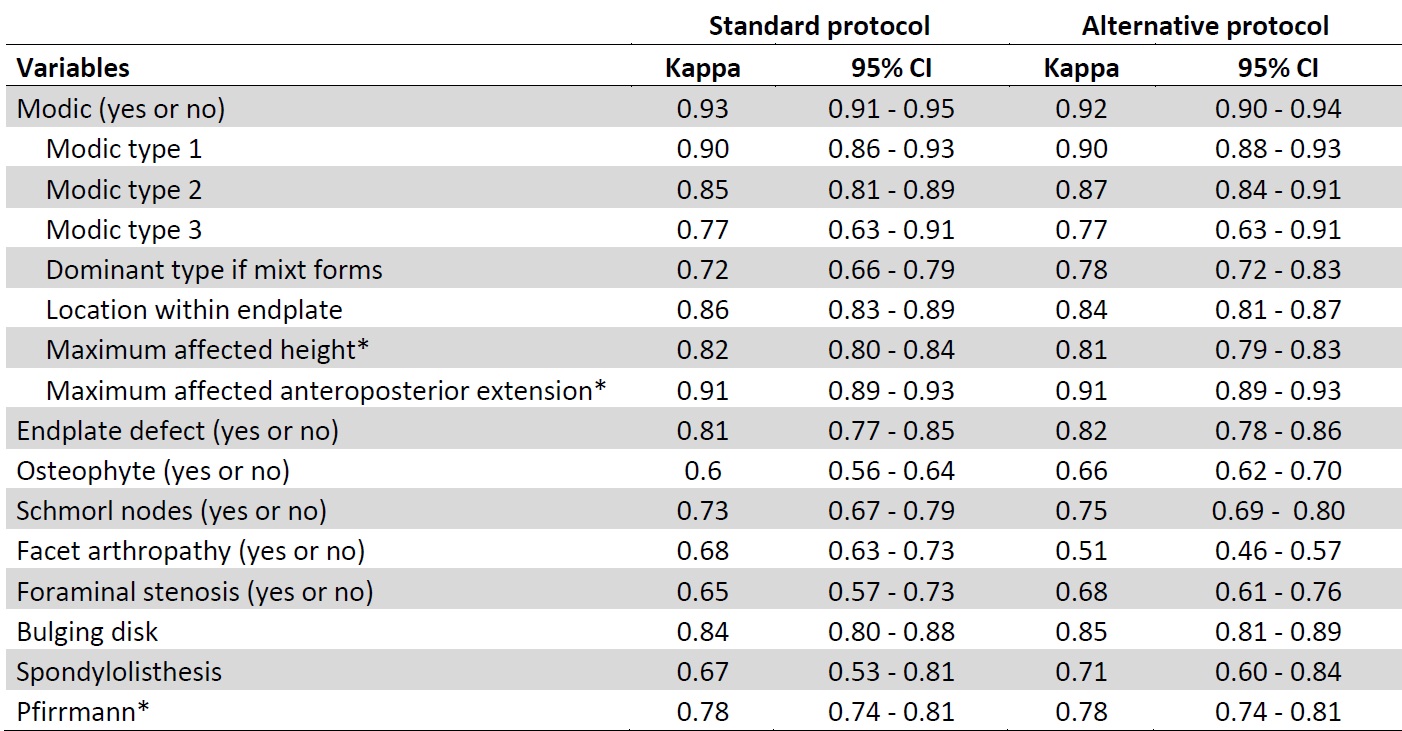

The results of the weighted Kappa statistics between pairs of observers